A new study led by Western researchers uses a newly developed 4D imaging technique, with time as the fourth dimension, to reveal how the brain processes multi-sensory audiovisual information. The researchers targeted the unique interaction that occurs when the brain processes visual and auditory inputs at the same time.

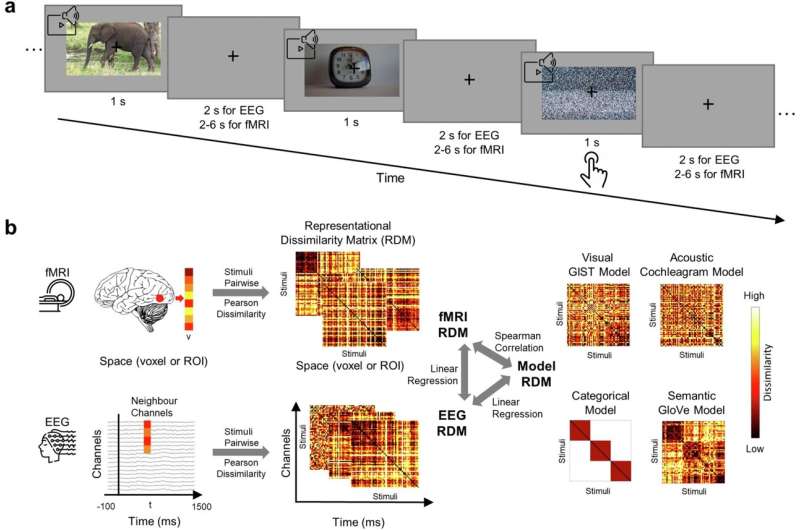

Computer science professor Yalda Mohsenzadeh and Ph.D. student Yu (Brandon) Hu used functional magnetic resonance imaging (fMRI) and electroencephalography (EEG) to analyze study participants' cognitive reactions to 60 video clips with corresponding sounds. The results revealed that the primary visual cortex responds to both visual and low-level auditory inputs, while the primary auditory cortex processes only auditory information.