Dyslexia, the world’s most common learning disorder impacting reading, spelling and writing, is estimated to affect up to 20% of the global population. Traditional approaches to studying dyslexia, such as behavioral and neuroimaging methods, have provided valuable insights but remain limited in their ability to test the mechanisms underlying reading impairments.

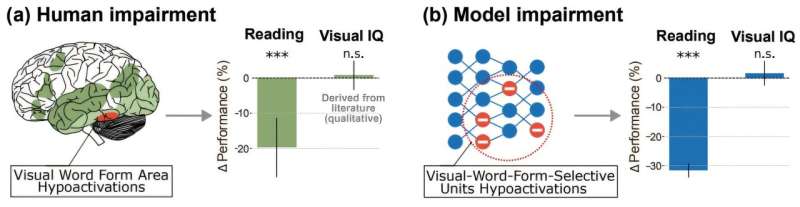

Now, researchers from EPFL’s NeuroAI Lab, part of the Schools of Computer and Communication Sciences and Life Sciences, have modeled dyslexia using next-generation Vision Language Models that capture the entire reading pipeline, from seeing words to processing them and understanding their context.